On first use, the application will ask you for the needed application permissions. Then the sample application provides a few options for you to use. Since we want users to be able to capture a longer note, we will use the Recognize continuously option. With this option the recognized text will show up at the bottom of the screen as you speak, and you can speak for a while with some longer pauses in between. Recognition will stop when you hit the stop button, so this option lets you capture a longer note.

This is what you should see when you try out the application:

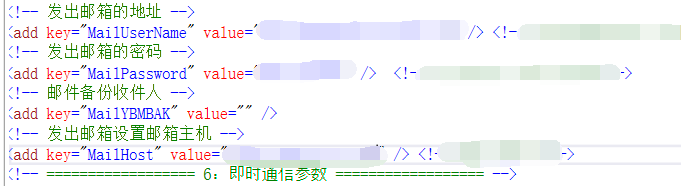
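To make the continuous option more concrete, here is a minimal sketch of how an app could keep the on-screen note up to date while the user speaks. It assumes the recognizer reports two kinds of results: partial text while a phrase is still being spoken, and final text once a phrase is complete. The class `ContinuousNoteBuffer` and its method names are hypothetical and are not taken from the sample app or the Speech SDK; in a real app you would call them from whatever callbacks your recognizer provides.

```java
// Hypothetical helper for illustration only; not part of the sample app or the Speech SDK.
final class ContinuousNoteBuffer {
    private final StringBuilder finalized = new StringBuilder(); // completed phrases
    private String interim = "";                                  // latest partial phrase
    private boolean stopped = false;

    // Partial result: replaces the previous partial text for the current phrase.
    void onInterimResult(String text) {
        if (stopped || text == null) return;
        interim = text.trim();
    }

    // Final result: the phrase is complete, so append it to the note.
    void onFinalResult(String text) {
        if (stopped || text == null) return;
        String phrase = text.trim();
        if (!phrase.isEmpty()) {
            if (finalized.length() > 0) finalized.append(' ');
            finalized.append(phrase);
        }
        interim = "";
    }

    // Stop button: drop any unfinished partial text and ignore later results.
    // (A simplification; a real recognizer may still deliver one last final result.)
    void stop() {
        stopped = true;
        interim = "";
    }

    // Text to show at the bottom of the screen: completed phrases plus the current partial one.
    String displayText() {
        if (interim.isEmpty()) return finalized.toString();
        if (finalized.length() == 0) return interim;
        return finalized + " " + interim;
    }

    public static void main(String[] args) {
        ContinuousNoteBuffer note = new ContinuousNoteBuffer();
        note.onInterimResult("buy");
        note.onInterimResult("buy milk");
        note.onFinalResult("Buy milk.");
        note.onInterimResult("call");
        System.out.println(note.displayText());
        note.stop();
        System.out.println(note.displayText());
    }
}
```

Keeping the completed phrases separate from the current partial phrase means a pause in speech never erases earlier text, which is the behavior you want for a longer note.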

Dictation is a quick and easy way to get your thoughts out, create drafts or outlines, and capture notes.

How to build real-time speech transcription into your mobile app

Prerequisites

As a basis for our sample app we are going to use the “Recognize speech from a microphone in Java on Android” GitHub sample that can be found here. After cloning the cognitive-services-speech-sdk GitHub repo, we can use Android Studio version 3.1 or higher to open the project under samples/java/android/sdkdemo. This repo also contains similar samples for various other operating systems and programming languages.

In order to use the Azure Speech Service you will have to create a Speech service resource in Azure as described here. This will provide you with the subscription key for your resource in your chosen service region, both of which you need to use in the sample app.

The only thing you need to do to try out speech recognition with the sample app is to update the configuration for speech recognition by filling in your subscription key and service region at the top of the MainActivity.java source file:

    // Replace below with your own subscription key
    private static final String SpeechSubscriptionKey = "YourSubscriptionKey";
    // Replace below with your own service region (e.g., "westus").
    private static final String SpeechRegion = "YourServiceRegion";

You can leave the configuration for intent recognition as-is since we are just interested in the speech to text functionality here.

After you have updated the configuration, you can build and run your sample. Ideally you run the application on an Android phone since you will need to have a microphone input.
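A common stumbling block is building the sample while the two configuration values in MainActivity.java still hold their placeholder text. As a rough sketch, a small check like the one below could flag that before you try to recognize speech. The class `SpeechConfigCheck` and its method are hypothetical and not part of the sample; only the two placeholder strings come from the sample's source.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration only; not part of the sample app.
final class SpeechConfigCheck {
    // Placeholder strings as they appear in the sample's MainActivity.java.
    static final String KEY_PLACEHOLDER = "YourSubscriptionKey";
    static final String REGION_PLACEHOLDER = "YourServiceRegion";

    // Returns a list of problems; an empty list means both values look filled in.
    static List<String> findProblems(String subscriptionKey, String region) {
        List<String> problems = new ArrayList<>();
        if (isBlank(subscriptionKey) || KEY_PLACEHOLDER.equals(subscriptionKey)) {
            problems.add("SpeechSubscriptionKey is not set");
        }
        if (isBlank(region) || REGION_PLACEHOLDER.equals(region)) {
            problems.add("SpeechRegion is not set");
        }
        return problems;
    }

    private static boolean isBlank(String s) {
        return s == null || s.trim().isEmpty();
    }

    public static void main(String[] args) {
        // Untouched sample values: both settings are reported.
        System.out.println(findProblems("YourSubscriptionKey", "YourServiceRegion"));
        // Made-up example values: nothing is reported.
        System.out.println(findProblems("0123456789abcdef", "westus"));
    }
}
```

This only checks that the values were changed, not that they are valid. Whether the key and region actually work is decided by the service when the app first connects.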

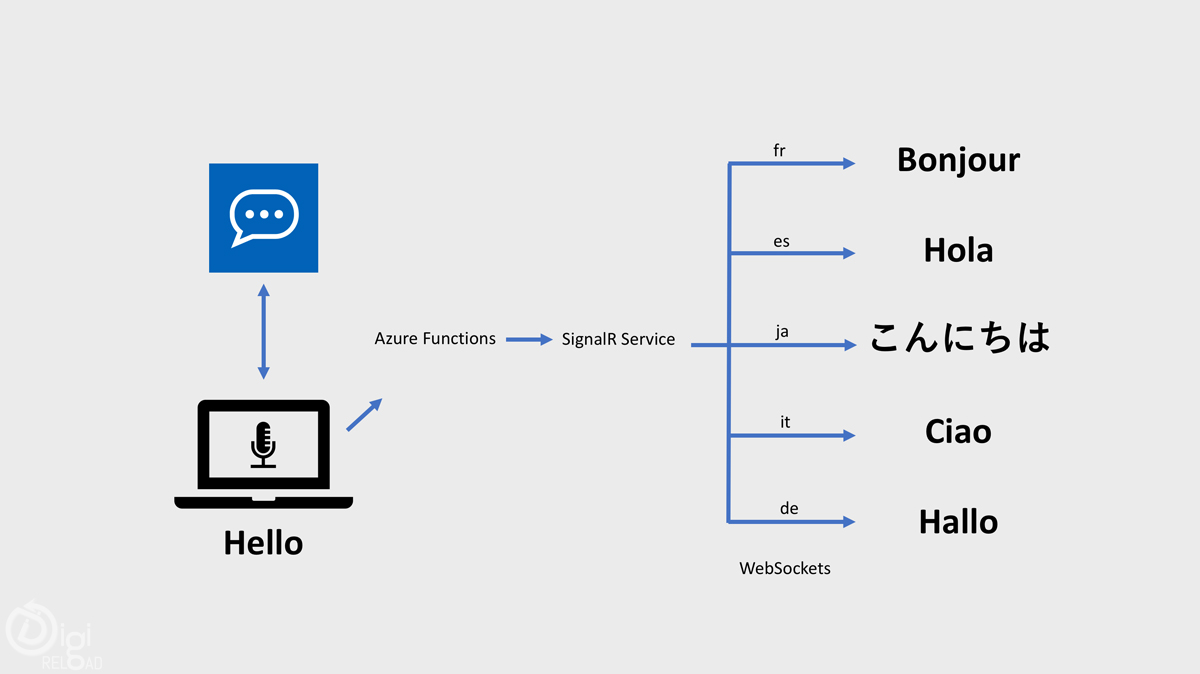

Microsoft Word provides the ability to dictate your documents, powered by the Azure Speech Service.

Microsoft Teams provides live meeting transcription with speaker attribution that makes meetings more accessible and easier to follow. This capability is powered by the Azure Speech Service.

Voice assistants using the Speech service empower developers to create natural, human-like conversational interfaces for their applications and experiences. You can add voice in and voice out capabilities to your flexible and versatile bot built using Azure Bot Service with the Direct Line Speech channel, or leverage the simplicity of authoring a Custom Commands app for straightforward voice commanding scenarios.

Gain insights from the interactions call center agents have with your customers by transcribing these calls and extracting insights from sentiment analysis, keyword extraction and more.
